Smarter AI with DataForce by TransPerfect

DataForce is a global leader in data augmentation and annotation services, combining secure, flexible, and scalable delivery models with the world’s most powerful annotation and resource management platforms.

- Audio collection & annotation

- Text collection & annotation

- Image collection & annotation

- Video collection & annotation

- Chatbot localization

Whether you're developing speech, NLP, computer vision, or conversational AI, we can collect and annotate data for you using the most streamlined processes and tools to complete your data set, reduce bias, and add labels.

- Quality assurance

- Program and project management

- Customer-first approach

- Integration with multiple platforms

Both data collection and enrichment can happen in our proprietary, secure mobile and web-based tools, but we also regularly use our clients’ platforms.

- 1,000,000 annotators

- 250 languages

- Specialized annotators by industry

- Data collection and annotation

- User studies and experience testing

Security is a top priority at TransPerfect. With a certified private infrastructure, your confidential data is secure and protected.

- GDPR compliance

- ISO 27001 certification

- SOC 2

- HIPAA

- Implementation of customer-specific policies

Smarter AI in every language

DataForce can collect custom voice data in more than 200 languages. Our demographically diverse, carefully screened crowd can provide audio recordings on the platform of your choice, including more than 40 mobile phone brands. We can also work with custom and proprietary devices.

After collecting the data, we review the quality and then categorize, transcribe, and deliver it to our clients through well-defined and accessible data flows.

Our machine learning-powered workflows can anonymize the audio before it’s exposed to the transcribers to add an extra security mechanism.

For more information, take a look at our case studies describing a few of our audio recording and transcription projects.

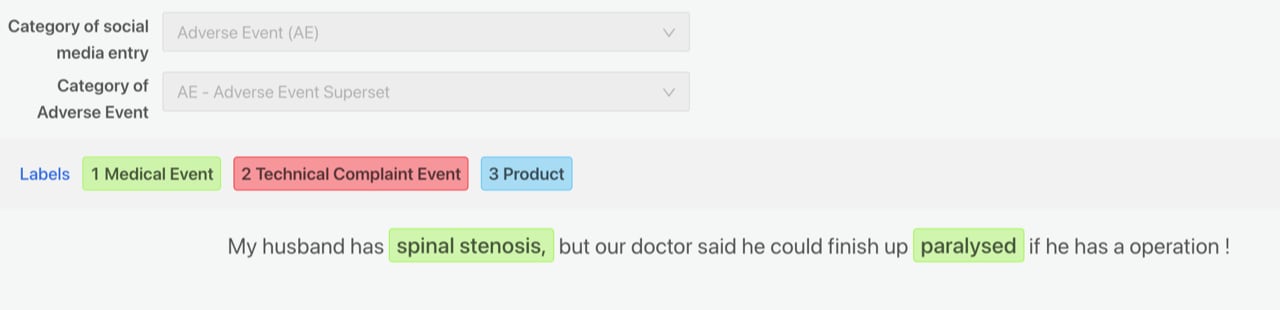

To develop the services, products, and experiences of the future, organizations need to leverage their text data. This includes documents, reports, emails, and other sources of structured or semi-structured data. Whether you're developing precision medicine, risk management, or conversational AI solutions, being able to train your models on accurately labeled data is the most reliable path to a successful deployment.

Our expert, trained annotators have access to state-of-the-art annotation tools. Combined with fine-tuned KPIs and quality assurance processes, this lets us provide best-of-breed annotations in any domain or industry.
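As an illustration of the kind of quality KPI that can be tracked for text annotation, here is a minimal Python sketch that computes Cohen's kappa, a common measure of agreement between two annotators who labeled the same items. This is an assumption chosen for illustration, not a description of DataForce's actual KPIs or tooling; the function name `cohen_kappa` and the sample labels are hypothetical.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement.

    Hypothetical helper for illustration only.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items")

    n = len(labels_a)

    # Fraction of items on which the two annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each annotator's label frequencies.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up sentiment labels from two annotators on the same four documents.
annotator_1 = ["pos", "pos", "neg", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg"]
kappa = cohen_kappa(annotator_1, annotator_2)
```

A value near 1 indicates strong agreement, while a value near 0 means the annotators agree about as often as chance would predict, which usually signals that guidelines or training need attention.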

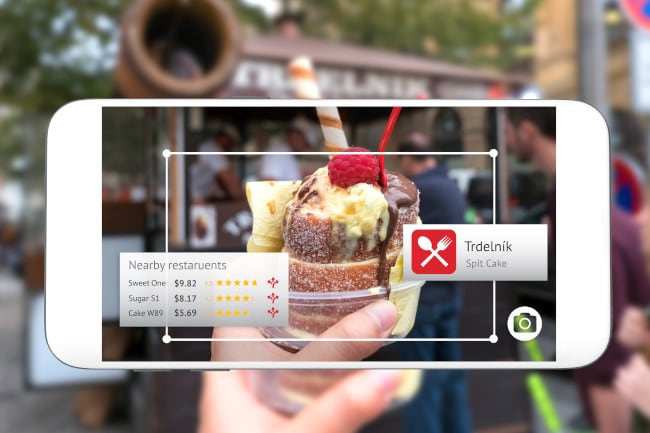

Computer vision is one of the most popular and rapidly adopted AI technologies. DataForce has developed a list of specialized services and technologies to support your innovative applications, including:

- Collection of custom images and videos representing diverse demographics, a wide range of locations (indoors or outdoors), and different hardware and sensor options, for applications such as consumer products, mobile apps, medical devices, self-driving cars, or consumer behavior monitoring

- Image and video annotation with bounding boxes, semantic segmentation, 3D objects, and LIDAR

- Image and video captioning, always using the right grammar and style with consistency across use cases and languages

All of our capabilities in the computer vision space have something in common:

- Excellent customer service

- Secure workflows

- Full transparency regarding privacy and quality procedures

- Carefully vetted and trained annotators

- Optimal quality/price ratio

DataForce has developed a unique, end-to-end localization process adapted to the needs of conversational systems. From high-quality training data and grammar development to transcreation of system responses, we ensure that your chatbot or virtual assistant will be:

- Fluent

- Brand-specific

- Culturally sensitive

- Compliant with all necessary regulatory requirements of your target markets

In addition to our data and linguistic services, our user experience and testing services will help you deploy your conversational AI solution with confidence in any market or environment, including cars, home appliances, websites, or mobile apps.

Many organizations have deployed human-in-the-loop (HITL) to help advance their products, features, and search algorithms. This process involves collecting inputs and measurements from humans to train, measure, and optimize the relevance and quality of results for end users and to create a better user experience.

Improving and training search algorithms relies on many relevance signals and requires vast amounts of training data. This is especially true when products are international and support many languages and markets. Human evaluators label, annotate, or rate high volumes of search queries with their corresponding results to generate meaningful insights that help improve the relevance and quality of search algorithms.
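To make the role of human ratings concrete, the following Python sketch shows one widely used way to turn graded relevance ratings into a single quality score for a ranked result list: normalized discounted cumulative gain (NDCG). It is a minimal illustration under stated assumptions, not a description of any particular client's or DataForce's evaluation method; the 0 to 3 rating scale and the function names `dcg` and `ndcg` are hypothetical.

```python
import math


def dcg(ratings):
    """Discounted cumulative gain for ratings listed in ranked order.

    Higher ratings count for more, and results further down the list
    are discounted logarithmically by their position.
    """
    return sum((2 ** r - 1) / math.log2(i + 2) for i, r in enumerate(ratings))


def ndcg(ratings):
    """DCG divided by the DCG of the best possible ordering of the same ratings."""
    ideal = dcg(sorted(ratings, reverse=True))
    return dcg(ratings) / ideal if ideal > 0 else 0.0


# Made-up evaluator ratings (0 = irrelevant, 3 = highly relevant)
# for the top four results of one query, in the order they were shown.
ratings_for_query = [3, 0, 2, 1]
score = ndcg(ratings_for_query)
```

A score of 1.0 means the engine already shows results in the order the evaluators consider best; lower scores indicate that highly rated results appear too far down the list.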

DataForce manages this type of work on a secure platform using a vetted community of human evaluators.

Beyond search relevance, our service extends to ads relevance, recommendation relevance, and content moderation.